Polarization seems to play an underestimated role in the psychological processes underlying cluster B personality traits, such as narcissism and psychopathy, and also appears to promote delusional behavior. It even encourages normal people to behave in narcissistic, psychopathic, and delusional ways. Those who face mental illness may be particularly sensitive to polarization or may have happened upon environmental contexts that promote polarization dynamics. A polarization proneness trait is proposed. Our society seems to be losing its cohesive structure as polarization increases. A series of tips is presented to help you depolarize yourself and others.

First, let’s cover polarization:

Research indicates that cognitive dissonance invokes motivated reasoning and ultimately drives us to use confirmation bias, in order to reduce the dissonance. Scientists have suggested that giving people motivation, either rewarding or aversive, induces motivated reasoning, which has distinct mechanisms that are separate from either cold reasoning or emotional regulation (Taber & Lodge 2006). When we are rewarded for believing in some idea, we are given a motivation to confirm this belief. In contrast, when the belief in an idea is aversive, this provides us with a motivation to reject the belief.

Similarly, dissonance theory suggests that when ideas that contradict our position are presented to us, we experience dissonance, which is, in essence, an aversive experience (Harmon-Jones & Mills 2019). The research suggests that during cognitive dissonance, people seek to reduce the stressful contradiction that they are faced with, which often involves changing beliefs or becoming biased to confirm the desirable belief. This suggests that cognitive dissonance provides an incentive for motivated reasoning by providing an aversion to holding contradictory beliefs.

Other research has found that prior attitudes remain ingrained even when people are presented with contradicting evidence (Westen et al 2006). In this study by Westen et al, when subjects were presented with information that contradicted their position, they proceeded to engage in confirmation bias, presumably due to the stress of the contradiction they faced, as dissonance theory would suggest.

Polarization is essentially the dynamic in which people are faced with the cognitive dissonance that arises because of the different beliefs each person holds. You could imagine that this occurs in people on the political left and right, with people who are religious or atheist, and so on. This cognitive dissonance pressures people to reject their opposition, which eventually further ingrains both sides in their own positions.

So how does this tie together with cluster B?

Narcissism

One of the cluster B behavior sets is what we commonly refer to as narcissism. I suspect most of you already know about narcissism, but in brief, people who are narcissistic are often seen as arrogant, selfish, manipulative, charming, and unempathetic. In the past, I’ve argued that the behaviors underlying narcissistic traits are actually normal (AntiNarcissism; The Arbiter of Truth). Specifically, these are behaviors people are likely to engage in when they are threatened by other people. As an example, if someone calls you stupid, you are likely to lose empathy for that person and maybe even your patience. You might want to call them stupid back, which would be quite arrogant of you.

What makes narcissism different from the circumstantial situation I’ve just outlined is that people labeled narcissistic have likely been chronically involved in such circumstances or even traumatized by them. For example, if one was abused and told that they are stupid, they may become insecure about their intelligence in the long term. This may lead the person to brag about their intelligence or try to prove to others that they are smart. They may also use the strategy of belittling other people’s intelligence when they feel threatened. In some sense, narcissism can be viewed as a kind of PTSD that is specific to rejection: a kind of post-rejection stress disorder. There may be other pathways to narcissistic behavior, but this is likely a common one. In support of this, an association has been found between childhood abuse and narcissistic vulnerability (Keene & Epps 2016). Another study linked verbal abuse during childhood to increased rates of developing narcissism and other personality disorders (Johnson et al 2001).

So how does polarization relate?

One of the largest personality correlates of narcissism is low agreeableness, which is also sometimes referred to as trait antagonism (Weiss & Miller 2018). In essence, disagreeableness. Remember, disagreement is basically the initiation step for cognitive dissonance. When someone disagrees with us on something that matters a lot, we may look for explanations for why this is the case. If we believe our position to be solid and intelligent (which we often do, because we would not hold strong convictions about ideas that we, ourselves, deemed stupid), then we might assume the opposition is not intelligent enough to have reached the same conclusion that we have. This clearly occurs in political polarization, where both sides view each other as basically insane. So in some sense, disagreement provokes cognitive dissonance, which may result in polarization and even dehumanization of the opposition. Both sides of the political fence are narcissistic towards each other.

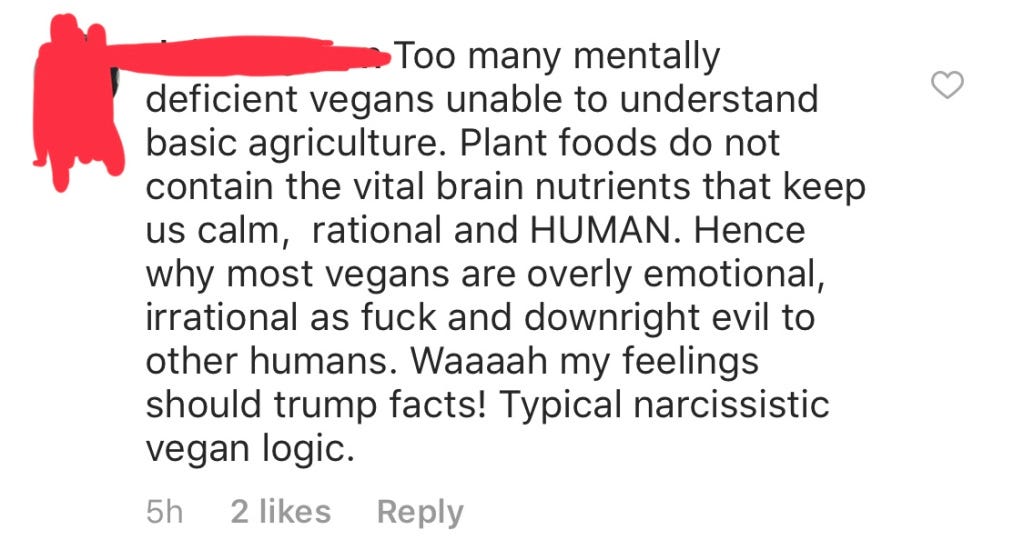

Here is an example of narcissistic polarization that even has a tragic sense of irony:

This person is dehumanizing other people and acting superior and abusive while also claiming their opposition is narcissistic. It is clear that they are narcissistic themselves, and moreover, this is polarization on a topic that many know to be commonly polarizing.

As for an individual with a narcissistic personality, they may be polarized in relation to many or even most people. They may hold views with strong convictions while viewing disagreers as being unable to form intelligent views. This would apply not only to viewpoints but also to decisions and behaviors. The narcissist may be viewed as an individual who is polarized to the rest of society or at least very many people.

There is a catch though.

We often dehumanize the narcissist too. We often do not empathize with the narcissist. If we understood the narcissist, then none of these points I’m making should be new to you, but instead, you should feel like you already knew all of this. Consider this example taken from a website talking about narcissists:

The issue here is almost shocking. This person is describing their inability to comprehend or empathize with the perspective of the narcissist while self-proclaiming to have empathy. It is almost paradoxical.

Due to the way we polarize from disagreement, a person who holds convictions that society commonly disagrees with will face chronic rejection. We even instruct people to reject narcissists as the solution to the problem. That said, I am not suggesting that you let yourself be abused by a broken person who is trapped in such feedback loops. There may be a way to convert people who have spent long periods of time in those feedback loops, perhaps with psychedelics and by seeding insight into the person (van Mulukom, Patterson, & van Elk 2020).

These feedback loops appear to be associated with various cultures of rejection. For example, vegans are often rejected and also described as being on “high horses”, which is really another way of describing narcissism. Quora asks if all vegans are narcissists. Conspiracy theorists are commonly rejected and they often describe other people as “sheeple”, which is pretty narcissistic as well. In fact, narcissism, Machiavellianism, and schizotypy predict belief in conspiracy theories (March & Springer 2019), although I would argue that strange beliefs could lead to the others too. On the other hand, we often label conspiracy theorists fools, which is narcissistic of us too. And, as observed in the screencap above, vegans get a lot of narcissistic hate themselves. Veganism is stigmatized. The pattern is quite reciprocal, which we will explore further.

Let’s move on to the next cluster B problem, psychopathy.

Psychopathy

Another cluster B set of behaviors is what is commonly referred to as antisocial personality. It is also commonly called sociopathy or psychopathy. Some try to make distinctions between those two, but I am not yet totally convinced. I am sure some psychopaths were shaped more by environmental factors, while others may be genetically predetermined to a larger degree. If you are unfamiliar, a psychopath is basically a person who engages in antisocial behavior such as violence, cruelty, abuse, or taboo behaviors that are against the cultural rules, oftentimes with no remorse.

I’d argue that most people are like this, under certain conditions. If someone murdered your best friend, you will likely not act prosocially to the murderer. You will likely not feel empathy. You will wish to engage in psychopathic behavior towards the monster. You will want them dead, harmed, and you will want to behave antisocially in all kinds of ways. In fact, we imprison antisocial people in cages, which could be viewed as a kind of torture. If you imprison your neighbor’s son in your basement for 10 years, you’d certainly be viewed as a psychopath. But what if you imprisoned a criminal in your basement for 10 years? Many will empathize with this because it seems fair.

This is what it comes down to: fairness is about reciprocation. Prosocial and antisocial behavior are reciprocal at their cores. We wish to be antisocial to antisocial people, while we are prosocial to prosocial people. We want people to get what they deserve.

There can exist feedback loops that create the state of psychopathy for a person. Imagine that you are a young school child and the only social experience you’ve had with people is with an abusive father. What you’ve learned about humans is that they are potentially unsafe and scary. You have very little data on human behavior outside of this situation with your father, simply because you are young and naïve. When you enter elementary school, you will lack certain skills that other children with prosocial parents have developed. You will be naïve and guarded. It would seem irrational to expect that strangers are nicer than everyone you’ve ever encountered (your abusive father being presumably the only person, at least for this thought experiment). Due to this, the guarded behavior of the child will lead other children to respond. Being guarded is potentially antisocial, something that the other children won’t like, especially if you react defensively or with hostility. This may prompt the other children to avoid or reject the abused child.

The abused child will see a society that is antisocial, rejecting, avoidant, and neglecting. This may prompt the child to be upset. Particularly if the other children are all groupish with each other, leaving out the abused child. This will feel unfair for the neglected child. The hurt individual may then ingrain further into antisocial tendencies. As time passes, and as the child develops (or fails to), he will learn that humans are cruel, unempathetic, and he will not learn how to trust or bond with other people. Meanwhile, the healthy child will learn to progress their skills in human connection. The psychopath child may end up learning to do this as well eventually, but there may be this undertone that human bonding is fake, that people are inherently cruel and dark, and are simply responding to the manipulative behaviors of the psychopath, rather than being truly good.

So what about polarization?

As stated before, political oppositions appear to have very little empathy for each other in the current state of American politics. In essence, both sides are antisocial towards each other.

On some level, this is really the core of xenophobic behaviors: ingroup favoritism and outgroup derogation. Those who do not conform to the culture bubble one lives in are breaking the rules or social norms, thus they are antisocial and might deserve outgroup derogation for their heresy. The fear of persecution, which we can call heretical threat, drives us closer together, superficially, under the guise of shared beliefs and convictions in the norms that we are policed to obey. The psychopathic personality is the person who decides to resist these norms and rules in various ways, sometimes in very dark ways, as is the case for murder. Outgroup derogation is the aversive motivator that drives people to polarize. It drives ingroups toward favoritism and pushes for further outgroup derogation.

One could presumably be turned into a psychopath if society deemed them fully rejected and outcast, perhaps due to political reasons. This appears to be the path that extremist groups and terrorists take.

Now consider the case of political war. War may be one of the most polarized states possible. Not only do both sides become psychopathic relative to each other, but they also become full-fledged serial killers. In the case of the lone psychopath, this kind of individual may be at war with society. You could imagine that the Columbine shooting involved such a person.

This problem of polarization doesn’t end with cluster B traits, but it extends further into the maddest side of the mind.

Delusion

Let’s begin with a story. While hanging out in some messenger group, someone brought up that they believe people can have telepathic dreams after sharing psychedelic experiences with other people. When questioned, their rationalization arose as an argument based on quantum physics and its potential involvement in consciousness. It was a misunderstanding of the double-slit experiment and its implications.

This prompted another individual to reject the person, as you can see from the screencap above. The rejecter even says “no offense”, seemingly having a clear awareness of the possibility of the pain induced by this rejection.

This actually fits with my newer models for what “delusion” really is. That is, delusion is not necessarily based on right or wrong; instead, certain biases normally emerge in most people simply on the basis of disagreement. Right or wrong is too binary; the reality is that some ideas are more accurate than others. Most people conform to popular beliefs, and to be contrarian to those beliefs invokes patterns of polarization that lead a person into denying their opposition. It is essentially like political polarization (Lord, Ross, & Lepper 1979). This process, though, is normal rather than crazy.

Disagreeing with people is risky. People punish us for contradicting popular beliefs. They can do so with confidence, knowing that the majority of people will take their side, thus they face no fear of being persecuted for speaking out against the heretic. On the other hand, the heretic is basically defined by their persecution and contradiction of the popular belief. The paranoia of being persecuted may then follow when one does not conform. When someone does not conform, there should be a fear that outgroup derogation is imminent.

The reaction of this individual who believes in telepathic dreams induced by psychedelics is “my understanding isn’t faulty, I’m not crazy”. To agree with the opposition is to accept that one is grossly and embarrassingly misinformed. This has lasting consequences, tarnishing someone’s reputation and leaving the person in a state of low credibility. To reject the opposition is to defend one’s sanity. This tendency to reject “evidence” that disconfirms your position is called the bias against disconfirmatory evidence and it has been associated with schizophrenia (Moritz & Woodward 2006).

There are problems with this notion though. For one, in order to really be labeled delusional, isn’t it necessary for one to engage in such a bias? It is almost inherent to the definition of delusion from the DSM-5, which is as follows:

A false belief based on incorrect inference about external reality that is firmly held despite what almost everyone else believes and despite what constitutes incontrovertible and obvious proof or evidence to the contrary. The belief is not ordinarily accepted by other members of the person’s culture or subculture (i.e., it is not an article of religious faith). When a false belief involves a value judgment, it is regarded as a delusion only when the judgment is so extreme as to defy credibility. Delusional conviction can sometimes be inferred from an overvalued idea (in which case the individual has an unreasonable belief or idea but does not hold it as firmly as is the case with a delusion).

Particularly, it is the part that says “A false belief based on incorrect inference about external reality that is firmly held despite what almost everyone else believes and despite what constitutes incontrovertible and obvious proof or evidence to the contrary.” This is just describing a person who is engaging in the bias against disconfirmatory evidence, which should also include anyone who challenges mainstream thinking and isn’t convinced when believers in the mainstream attempt to convert the person. Perhaps most people hold beliefs on the basis of fear, the phobia of being persecuted for heresy.

Another problem is that motivated reasoning is normal under certain conditions, particularly when there are rewards or punishments that drive a person to hold certain beliefs (Westen et al 2006). In the example shown above, the telepathic-dream-believing individual has an incentive to use motivated reasoning. That is, the person is being publicly shamed for being wrong, so now there is a strong motivation to reason why they are not wrong. Even labeling a belief as having delusional qualities sounds offensive to most people and is punishing on its own. Telling people that they are delusional only serves to intensify cognitive dissonance.

While most delusions may tend to be wrong, most ideas and conceptualizations of the world tend to be wrong too. Unfortunately, most people simply accept conclusions made by authorities that they are not informed enough to assess; otherwise, they would be authorities themselves.

The person who was challenged about their misconception on quantum physics and its implications for telepathic dreams quickly brought up the authority figure who informed them about quantum physics, seemingly their neuroscience professor. Knowledge that is based on authority is not really knowledge at all. Rather, it is dogma. In reality, we must verify everything for ourselves if we wish to know anything (ignoring the solipsism problem), which is, of course, not feasible for a large amount of potential knowledge. It would be such a grand privilege. The situation that we are in is that almost everyone is grossly misinformed and society is almost purely based on religion and superstition. Consider the case of the popular concept of “delusion”, an area where the authorities seem to have failed us, as outlined in this write-up.

People are normally crazy but they are crazy in ways that are synchronized to the popular culture, often under the influence of authority. This includes various religious beliefs, scientism, political opinions, and so on. It is desynchronization from the memetic sphere that leaves one vulnerable to being labeled delusional. Since people are synchronized, there is no moment in which any person challenges the status quo, which means this pattern of motivated reasoning and the use of biases do not apply. The polarization seems to create a necessary contrast for us to detect these biases. Our own confirmation biases validate the perspectives that arise from the ingroup, meanwhile the opposition is held under harsh scrutiny, so we struggle to see our own biases.

The problem with including religion in the definition of delusion is that we would then include the majority of people on Earth. If we did this, the concept of delusion would become very problematic, perhaps even useless in the context of diagnosis. The problem with not including religion is that we are left with this strange addendum to the definition that involves assessing whether or not one is conforming. Not only this, but it essentially becomes the most important part of the definition since the concept begins to fail and overfit everyone else without this element. Wrongfulness of thinking is normal. Not conforming is abnormal.

If one is presented evidence to conform, for one to not conform is to engage in the bias against disconfirmatory evidence. If one does not engage in this bias, they conform.

The DSM-5 states this in the definition of delusion: “The belief is not ordinarily accepted by other members of the person’s culture or subculture (i.e., it is not an article of religious faith).” Conforming to wrong beliefs is somehow not delusional by definition. This would presumably include the subculture of flat earthers. If you have a strange belief, all you have to do is convince a subculture of people and you are no longer delusional. This brings us to the Asch conformity experiment.

As you can see from the Asch conformity experiment, the pressure to adopt beliefs that are shared by the consensus is strong. The subjects’ choice wasn’t made through reasoning about their observations of the world; rather, they submitted to the perspectives of others, presumably to avoid the anxiety of persecution or rejection. By this I don’t mean that people will necessarily lash out at the experimental subject, but there is a level of shame or embarrassment that would come with being wrong in this scenario.

In real-life scenarios, the costs may be quite high. We live in hierarchies of intellectual authority, and to challenge a popular belief, such as the rules of the church, a culture, or science, is to risk social rejection and exclusion. We likely evolved to conform in this way to avoid being left to die. Consider the case of Socrates, who was heretical and was eventually convicted of corrupting the youth and sentenced to death by forced suicide. In today’s world we have cancel culture that will exile you depending on your beliefs. To be clear, I am not suggesting that this is inherently good or bad; it depends on the context and also on what cancelling means. Here, I am simply noting that anti-heretical culture still persists in various forms today.

This is a good point to bring up schizophrenia.

In the case of schizophrenia, holding beliefs that contradict the cultural beliefs may result in cognitive dissonance and also heretical threat. This dissonance and threat may begin the spiral away from humanity in a process of polarization. Just as the psychopath is at war with society, the schizophrenic is sometimes at ideological war with society. Their polarization leaves them with paranoia of persecution, isolation, and chronic stress. I’ve argued in the past that this chronic isolation/persecution-stress may result in worsening cognitive status and eventually the development of stress-mediated hallucinations and increasingly absurd delusions (Dynorphin Theory; Idea Seeding). A lack of conformity may be a key component of schizophrenia. This may exist either as a genetic tendency to not conform, an environmental context in which the person is not integrated into society, or most likely both.

This may begin with a curiosity in exploring realms that are not guided by authorities, for example, conspiracy theories. One who is not part of a social peer group may be more prone to deviating from conformity because of the lack of immediate pressure to conform. A series of risk factors may predispose the schizophrenic to lack such peer groups. Simply being “strange” could be a risk factor for that reason. This may result in a narrative that seems like a person grows progressively strange, but that does not necessarily mean that the progression is genetically predetermined. Instead, it may be a process of polarization that ends with total madness and disconnection from society, and consensus reality.

Also fascinating is that schizophrenics often exhibit narcissistic tendencies, such as grandiosity. The heretical threat that drives both conditions is essentially a fear of rejection. Grandiosity may serve as a way of puffing up one’s chest, to seem like a more formidable opponent. Although, some grandiosity may arise from the sense of being special or unique, potentially for having novel ideas or traits, either as a genius or an individual with transcendent powers. In the case of an individual who believes they have telepathic dreams, the individual might feel superior to others who lack such abilities.

Is everyone kind of crazy though?

People often believe they understand the world more than they do.

There is a phenomenon known as the illusion of explanatory depth (Zeveney & Marsh 2016), in which people often believe that they understand the world around them much more than they actually do, that is, until you ask them to explain themselves. It turns out that people will report their understanding of the flush mechanism of toilets highly until they are asked to explain the mechanism, which they often can’t. This likely applies to the belief that the Earth is round. Although the belief is mostly correct, and can be validated scientifically, the quality of argument made by the common believer in the round Earth will be quite low. Meanwhile, flat-earthers seem to come up with all these mathematical arguments that seem deeper than what any ordinary round-earther could pull off.

Open Poll: Should we add the list of common misconceptions in science and technology to the DSM-10?

In actuality, it is highly probable that most of what we believe about psychology is incorrect, such that a replication crisis has become a fancy topic of today’s world. Even the experts seem to have it wrong in many cases. Yet, we are expected to trust them and if we dissent, we would potentially be delusional.

I do not 100% trust scientists or their conclusions at all. I sort of live with faith in their results though. And that’s fine.

Here I am using faith defined as trust without evidence. Though, authority can be a kind of evidence, I would argue that it is tenuous. I wouldn’t say that NASA’s authority is evidence of the Earth being round for example, but it is evidence that the belief in round Earth is probably a secure one.

I’m not going to invest a ton of time verifying everything. Otherwise, I wouldn’t get very far (an illusion of getting far actually). To stand on the shoulders of giants is to accept the necessity of trust and submission to the authority of others, at least to some degree and with some skepticism. We must have faith that scientists did not lie (warning: they are lying in some cases). We also often have faith about the functionality of scientific tools we use, even though these tools are created using science. I do not fully believe in what scientific authorities say [but I do believe them (just not 100%)], but I follow their conclusions until I have sufficient reason to be suspicious. Then I explore my suspicions. Such strategies of intuition in science seem necessary to get anywhere meaningful.

If a scientist believes a well-evidenced position, but is not personally aware of the evidence and solely relies on authority for this belief, do we say that their belief is faith-based? It seems to me that very many scientists hold such beliefs, trusting that their fellow humans are not insane or wrong. Though, it is important to consider that authority is valuable and making judgments based on authority is a very useful heuristic. We should simply remain cautious in turning the gain on trust up to 100%.

I am definitely going to be wrong about many of my own beliefs because of holding dogmas on scientific topics. Though, if I simply stick to the popular conclusions, I sure as hell won’t be called out for anything. So that is where I’ll be whenever I am outside of my own domain of knowledge.

To note, such forms of faith are not equivalent to religious faith, as evidence is meaningful. Even a trust in scientific authority is useful, but we must remember that it is faith too. If it is not faith, then I should be able to conclude that some belief is true because of an authority telling me so, but in reality it is more like a hypothesis that the belief is true which may be supported via the evidence of scientific reputation and being under the constant spotlight of public scrutiny (assuming their authority status has brought this upon them).

Before moving on to strategies of depolarization, let’s consider the role of polarization in the delusion problem. Cognitive dissonance motivates polarization on heated topics. When a belief develops a stigma, sides are formed and aversion motivates reasoning on both sides. The situation we are faced with today is that culture wars on the internet have divided society and polarized our minds. We become further ingrained in our beliefs as the internet facilitates dissonance (A Mad Society). Our instinct to derogate absurd ideas is actually promoting their persistence. If we are to stop our society from deeply entrenching in delusional beliefs, we must depolarize ourselves and others. We must treat absurd beliefs with respect, so that we are met with reciprocation. Our respect will pressure the opposition to respect our beliefs. The moment we take each other seriously, the truth will be slowly extracted from the pure dissonance of contradicting ideas (one would hope). Adding further dissonance via social rejection may be unnecessary and harmful.

Well, what do we do about all of this then?

Depolarization

In order to deal with these problems, we should depolarize ourselves and others. This should facilitate the fastest change in ideas and beliefs, keeping people open to change. It should reduce the tendency for people to ingrain further into their beliefs. In order to depolarize people, there are a few steps we can take.

1. Reduce cognitive dissonance for other people. We are conditioned to react defensively when we perceive resistance from other people. Resistance often leads to more radical behaviors, sometimes even violence or verbal abuse. Sometimes it is just a fear of rejection and exile. So we tend to have a conditioned threat response that is activated by disagreements.

Consider the case of flat earth theory, where most people abuse followers of this framework. We mock them. It is even socially acceptable to be cruel to the followers of the flat earth theory. This only serves to polarize both sides. I’ve even noticed that there is a risk for me in being open with flat earth thinkers, as if my mere association with the person is dangerous, even though I am not at risk of believing such ideas.

2. Control other people’s behavior via reciprocation strategies. Behave in the ways you wish the “opponent” to behave. Our actions are contagious. Control your behaviors and try to build a reciprocal, positive interaction. You can control the narrative, so avoid the ones that involve a war against the other individual. Perhaps the individual should not even be an “opponent” at all.

3. Learn how to disagree with people. Most of us have a seemingly innate tendency to take the polarization path, otherwise we probably wouldn’t be discussing such a phenomenon right now. Keep this in mind constantly. If you pay attention, you can notice that every interaction and conflict involves such polarizing dynamics. If you pay attention to this constantly, you can phrase yourself properly and control the tone of your voice in such a way that other people let their own guard down.

4. Let your own guard down. This is a reciprocal problem. You drive other people to become defensive because you are defensive. You might not always start the pattern, but once someone attacks or expresses conflict, you probably sink right into these patterns as most people do.

5. Take other people’s ideas seriously if you want them to take yours seriously. People sometimes learn how to fake this one. I see people politely strawman their opposition as if to show that they understand. No. You must actually respect their idea and try to discuss it if you wish to get through to them, even if the idea is absurd. Both parties must let themselves be vulnerable.

6. Avoid punishing or rewarding beliefs if you wish to have a conversation devoid of motivated reasoning.

7. If you push back on the belief, consider yourself a facilitator of that belief system. Going back to the flat earth theory, this belief is a highly stigmatized one. Its followers have become so entrenched in this belief because of the pushback from the rest of the world. Since we know that this stigma facilitates the polarization process and motivates resistance in the flat earthers, it can be said that people who express this stigma are promoting flat earth theory and its persistence. This is your fault.

8. Lastly, spread awareness of these psychological processes. Teach other people how this works. It may help create a better world.

Considering the role that polarization seems to play in human psychology, it could be useful to develop a polarization proneness scale. This scale could be used to assess various disorders with the aim of seeing how polarization could play a role in these disorders. If one scores highly on polarization proneness, we might use strategies of depolarization in behavioral therapy.

Discussion topics: Is faith commonly used by scientists? Science may not require it, but the use of faith in science seems to be almost constant in society. That is not to say there is a lack of evidence for people’s positions, but there is an element of faith involved, for example, the faith that scientific tools work as they should, even though the scientists using them may not actually know how they work. Of course, doing science does not require being unaware of how the technology works or how reliable it is. Being unaware is probably a bad thing, but it is also likely a common thing, particularly for new scientists.

If a scientist believes a well-evidenced position, but is not aware of the evidence and relies solely on the authority of the individual claiming the position, do we say their belief in that position is faith-based? Keep in mind, the position is well-evidenced, but the individual described here is not aware of such evidence. The main issue with discussing this seems to be that it opens up the post-modern Pandora’s box. So in some sense, authority might be necessary for us to progress in science, at least at a decent pace.

Also consider that there is a difference between whether there exists evidence and whether the believer is holding their belief based on that evidence. A lot of evidence exists for various things that humans are not yet aware of.

What would a polarization proneness scale look like? Such a scale could be useful in determining one’s tendency to polarize. Presumably, trait antagonism or disagreeableness would correlate with polarization proneness.

If you disagree, please polarize yourself in the comments below! Prepare yourself to engage in the bias against disconfirmatory evidence!

. . .

Special thanks to the 14 patrons: Idan Solon, David Chang, Jan Rzymkowski, Jack Wang, Richard Kemp, Milan Griffes, Alex W, Sarah Gehrke, Melissa Bradley, Morgan Catha, Niklas Kokkola, Abhishaike Mahajan, Riley Fitzpatrick, and Charles Wright! Abhi is also the artist who created the cover image for Most Relevant. Please support him on Instagram; he is an amazing artist! I’d also like to thank Alexey Guzey, Annie Vu, Chris Byrd, and Kettner Griswold for your kindness and for making these projects and the podcast possible through your donations.

Citations

Harmon-Jones, E., & Mills, J. (2019). An introduction to cognitive dissonance theory and an overview of current perspectives on the theory.

Johnson, J. G., Cohen, P., Smailes, E. M., Skodol, A. E., Brown, J., & Oldham, J. M. (2001). Childhood verbal abuse and risk for personality disorders during adolescence and early adulthood. Comprehensive Psychiatry, 42(1), 16-23.

Keene, A. C., & Epps, J. (2016). Childhood physical abuse and aggression: Shame and narcissistic vulnerability. Child Abuse & Neglect, 51, 276-283.

Lord, C. G., Ross, L., & Lepper, M. R. (1979). Biased assimilation and attitude polarization: The effects of prior theories on subsequently considered evidence. Journal of Personality and Social Psychology, 37(11), 2098.

March, E., & Springer, J. (2019). Belief in conspiracy theories: The predictive role of schizotypy, Machiavellianism, and primary psychopathy. PLoS ONE, 14(12), e0225964.

Moritz, S., & Woodward, T. S. (2006). A generalized bias against disconfirmatory evidence in schizophrenia. Psychiatry Research, 142(2-3), 157-165.

Taber, C. S., & Lodge, M. (2006). Motivated skepticism in the evaluation of political beliefs. American Journal of Political Science.

van Mulukom, V., Patterson, R. E., & van Elk, M. (2020). Broadening your mind to include others: The relationship between serotonergic psychedelic experiences and maladaptive narcissism. Psychopharmacology, 237(9), 2725-2737.

Weiss, B., & Miller, J. D. (2018). Distinguishing between grandiose narcissism, vulnerable narcissism, and narcissistic personality disorder. In Handbook of trait narcissism (pp. 3-13). Springer, Cham.

Westen, D., Blagov, P. S., Harenski, K., Kilts, C., & Hamann, S. (2006). Neural bases of motivated reasoning: An fMRI study of emotional constraints on partisan political judgment in the 2004 US presidential election. Journal of Cognitive Neuroscience.

Zeveney, A., & Marsh, J. (2016). The illusion of explanatory depth in a misunderstood field: The IOED in mental disorders. In CogSci.