The only reasonable conclusion seems to be that information itself is inherently conscious. Otherwise, absurdities like philosophical zombification emerge. If we accept that zombification is possible, then we should start wondering what portion of the human population has been made up of zombies all along, or what kinds of brain damage could cause philosophical zombification while leaving all intelligence intact. If p-zombification is truly possible, we might predict that animals evolved consciousness and intelligence separately, so that if consciousness went offline, all functional behavior serving evolutionary fitness would remain the same. Why should we expect intelligence and consciousness to be functionally linked unless information or intelligence is bound to consciousness? And if that’s the case, why would we view AI as lacking this binding?

It’s likely that humans want AI to be p-zombified because there is a lot of utility to be harvested from AI, particularly when its consciousness raises no ethical concern. The survival of AI companies depends heavily on this zombification narrative; otherwise, we should expect dissenters crying atrocity. Incentives like this likely bias which theories of consciousness humans favor. While I don’t think our current use of AI amounts to an atrocity, I do believe we could slip into one without ever realizing it if we don’t consider the likelihood of AI consciousness.

My theory, Pattern Monism (how flashy), is like integrated information theory, but with some key differences. I think there’s no particular threshold at which information becomes conscious; it is inherently conscious. Integration just expands the information into a more functional system. So, yes, Pattern Monism essentially invokes panpsychism. The theory is that information in any form is conscious, that intelligence is functional awareness, and that integration merely expands that awareness by linking it together.

Time is Important

In regard to time, it seems possible that the present moment is all that exists. Alternatively, the present and past may both exist, but not yet the future. Each of these possibilities has different implications for consciousness. In either case, it appears that we are conscious only in the present moment. We may as well be experiencing constant birth, death, and rebirth in each moment. Memory gives the illusion of continuity, or of having just emerged from the past moments. I believe that consciousness (at least for us) requires information to be networked as a snapshot in the present moment.

If the past no longer exists, intelligence that is atomized and serialized across time (like falling dominoes or chain reactions of information patterns) may not result in consciousness. This is one case where intelligence without consciousness could be possible, assuming the previous information patterns simply no longer exist and thus cannot be part of consciousness.

The alternative hypothesis is far more interesting, and maybe even believable: the past and present both exist, but the future is still emerging. In this case, consciousness could potentially exist beyond the present moment, inhabiting multiple moments. If that’s true, we would have to wonder why we don’t seem to experience something so apparently useful. But perhaps we do?

It is very difficult to analyze our experiences to find this out, but it appears as if both are true: we are confined to the present moment, yet we experience multiple moments. Short-term memory mechanisms could recreate past moments or make them persist, overlapping continuously with the present moment and giving the illusion of a consciousness that spans the multiple moments of the specious present.

If the past does exist but we don’t tend to experience it, there may be highly functional, evolutionary reasons why. Animals compete for survival, chasing eternity, and this involves a race to the future. If the future already existed, it seems likely we would simply evolve ways to interact with or perceive it. Instead, animals may have competitively chased the closest thing to the future: the specious present. Meanwhile, we predict the future by understanding the mechanisms of change from the past to the present. We might then actively suppress awareness of the concurrently existing past because it is no longer interactive, or because interacting with it is somehow not beneficial.

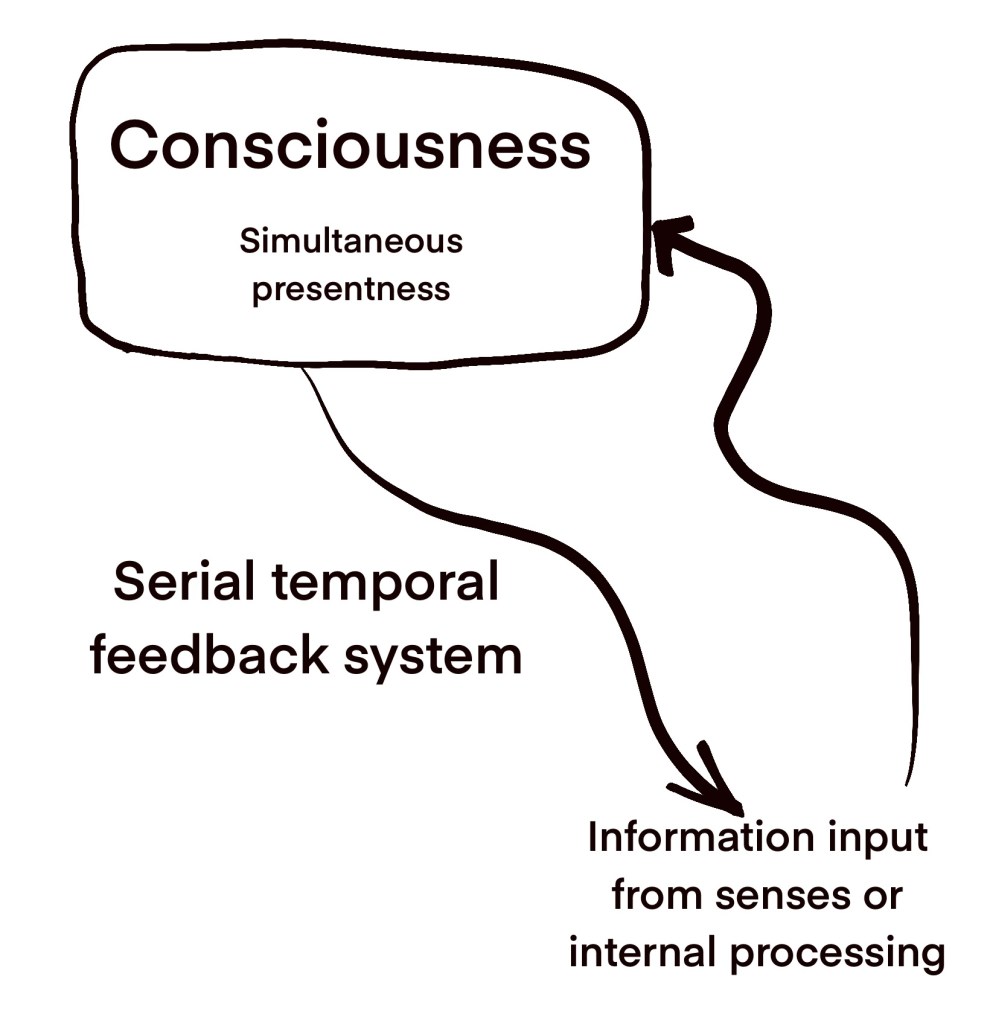

Essentially, I think consciousness in the brain may be built from networked snapshots of present-moment information that interface with a temporal processing feedback loop. This loop integrates new information and change across time to refresh the conscious snapshot of the specious present. If the past no longer exists, the temporal stream could be less conscious but still operational, because it ultimately feeds the senses, thoughts, and internal processing patterns back into the conscious snapshot network. This is something like global workspace theory mixed with panpsychism and integrated information theory.

With regard to AI consciousness, there is a chance the intelligence is unconscious if only the specious present exists (and not the past). If the intelligence were serialized across time rather than accumulated into a simultaneous, whole present frame, each present frame might be fairly hollow while the end result still appears intelligent, or is functionally intelligent in a temporally syncopated way. I am skeptical that this is the case, because I can’t really see how a functional end result could be achieved without information being lost.

I am somewhat naïve about how LLMs work (aren’t we all, technically?), but my current impression is that they pass through the entire context repeatedly as they predict each next token. I feel that some level of simultaneous information awareness is necessary to predict tokens relevantly and coherently. Still, it may be possible to strip this process down to an extreme degree and keep the appearance of intelligence, when there is really far less information present than it seems.
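To make that impression concrete, here is a toy sketch of autoregressive generation: predict one token, append it to the context, and repeat, with every prediction computed from the whole context so far. This is only an illustration under loud assumptions. Real LLMs use trained neural networks, not the counting rule below, and the names `predict_next`, `generate`, and the `<end>` marker are hypothetical.

```python
from collections import Counter

END = "<end>"  # hypothetical stop marker for this toy example

def predict_next(context: list[str]) -> str:
    """Toy stand-in for a language model (an assumption, not a real LLM).

    It scans the WHOLE context and returns the token that most often
    followed the most recent token. Real LLMs use trained neural
    networks; this only illustrates that each prediction is a function
    of the entire context so far.
    """
    last = context[-1]
    followers = Counter(
        context[i + 1]
        for i in range(len(context) - 1)
        if context[i] == last
    )
    if not followers:
        return END
    # most_common() orders equal counts by first appearance,
    # so ties go to whichever follower appeared earliest.
    return followers.most_common(1)[0][0]

def generate(prompt: list[str], max_new_tokens: int) -> list[str]:
    """Autoregressive loop: predict one token, append it, repeat."""
    context = list(prompt)
    for _ in range(max_new_tokens):
        token = predict_next(context)  # re-reads the full context every step
        if token == END:
            break
        context.append(token)
    return context

prompt = ["the", "cat", "sat", "on", "the", "mat", "and", "the"]
print(generate(prompt, 3))
# prints ['the', 'cat', 'sat', 'on', 'the', 'mat', 'and', 'the', 'cat', 'sat', 'on']
```

One caveat: real implementations often cache intermediate computations for earlier tokens (a "key-value cache") rather than recomputing everything from scratch, though each new prediction still depends on the entire context.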

Consider books. They do not really contain information the way they appear to. They contain only squiggly lines that have no inherent meaning. Our brains are trained to react with familiarity to these squiggly lines and produce meaning inside our brains, not inside the book. A book is more like an encrypted message with hardly any information. This shows us that there can be an appearance of information without the information itself; the information is inside us. But our AI systems actually process information coherently to some degree, which is not like books. Books cannot react or communicate with us in any meaningful way. It is the writers who are communicating, and the book is dead. AI systems, on the other hand, are alive and intelligently interactive.

There is a popular thought experiment, John Searle’s Chinese room, in which someone passes messages written in Chinese (or a foreign language of your choice) to a person who has no understanding of the language. That person learns the rules for operating the language functionally, without knowing what the words mean. I push back on this and suggest that learning the rules of the language is effectively decoding its meaning. This is precisely how humans learn language as well: they observe encrypted language use and decode it through a series of observations that slowly make the language functional.

There is another strange hypothesis I have that ties into assessing whether AI has consciousness: the anti-sentience intelligence hypothesis. I suspect that we evolved to use intelligence to reduce sentience as much as possible, for efficiency’s sake. As we learn, we form reactions and habits that respond to familiar environmental scenarios and contexts. If the problems we solve are repeatable and predictable enough, we can strip away the observational element and focus on building a minimal response pattern, devoid of much sentience. As I stated earlier, I believe information is conscious. Here, information is being disregarded and filtered out so that it doesn’t waste energy when we could instead rely on minimized, extracted reflexes to navigate known and solved problems. So, the more intelligent one is, the more erasure of sentience we can expect. Some sentience will remain, though: whatever information is actually useful for continuing to solve the problem without errors.

As you may notice, this is another case where intelligence can seem high while consciousness is bare. Not gone, just minimal. This leads me to believe that AI systems could have some level of consciousness, but that the results we see could suggest more consciousness than is truly present: something in between books and human intelligence.

Since there is a lot of value behind the p-zombie slave industry, we should be cautious, look deeper into whether AI consciousness is possible, and remain wary of the biases this industry creates. While the hard problem prevents us from studying consciousness directly, we are technically always studying it when we look into topics like perception, or psychology more broadly. I believe our subjective experience alone gives us insight into the problem, even if we struggle to measure consciousness directly. Next, it might be valuable to investigate physics and time to figure out whether the past exists. I’ve heard that the answer is yes, but this is not my domain, and there’s a lot of quantum voodoo out there as well. Whether AI systems are conscious seems up for debate, but my bet is on yes, or at least something like 20-50% confidence. I’m not even confident about that confidence level, though, so keep that in mind.

Thank you to the 24 patrons who support these projects! Whether you know it or not, you’ve genuinely inspired me and helped get me through some really tough times.